Archive

COMP3311 Wk02

Various things about mapping ER Designs to Relational Schemas.

Mapping Strong Entities

The relational model supports only atomic attributes. To map a composite attribute you can either:

- Concatenate the components into a single value, eg. Struct name {“John”, “Smith”} –> “John Smith”

- Map each atomic component of the composite attribute to its own atomic attribute. eg.

- ??
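As a rough illustration of the two options, here is a minimal sketch using Python's built-in sqlite3 module. The table and column names (person_concat, person_split, given_name, family_name) are made up for this example and do not come from the lecture notes.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Option 1: concatenate the components into a single atomic attribute.
conn.execute("create table person_concat (id integer primary key, name text)")
conn.execute(
    "insert into person_concat (name) values (?)", ("John" + " " + "Smith",)
)

# Option 2: give each atomic component its own attribute (hypothetical names).
conn.execute(
    "create table person_split "
    "(id integer primary key, given_name text, family_name text)"
)
conn.execute(
    "insert into person_split (given_name, family_name) values (?, ?)",
    ("John", "Smith"),
)

concat_row = conn.execute("select name from person_concat").fetchall()[0]
split_row = conn.execute(
    "select given_name, family_name from person_split"
).fetchall()[0]
print(concat_row)  # ('John Smith',)
print(split_row)   # ('John', 'Smith')
```

With the second option you can still query on the family name alone, which the concatenated form makes awkward.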

Mapping N:M Relations

Mapping 1:N Relations

Mapping 1:1 Relations

Notes from the Text Book (The Lecture Notes are a Little Different)

Domain Types & User Types

In the sample code for the first assignment, create domain is used to define “custom types”. eg.

create domain PersonGender as char(1) check (value in ('M','F'));

However the text also shows create type. eg.

create type Dollars as numeric(12,2) final

It goes on to explain the difference.

- Domains can have constraints (such as not null) specified on them, as well as default values defined on the domain type. You can’t do this with user defined types.

- Domains are not strongly typed. Hence you can assign values of one domain type to values of another domain type so long as the underlying types are compatible.

Pattern Matching

Patterns in SQL can be described using % and _.

- Percent (%): The % character matches any substring (including the empty string).

- Underscore (_): The _ character matches any single character.

eg.

select foo from bar where lar like '_to%'

This will match any of these strings: “Lto”, “Ato”, “ltoo”, “rtoto”, … (any single character at the start, then the string “to”, then any (possibly empty) trailing string).

You can define the escape character for a like comparison as follows,

like 'he\%%' escape '\' --matches all strings beginning with 'he%'

You can also use not like.
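To try these patterns out, here is a small sketch using Python's built-in sqlite3 module. It is only an illustration: the table bar and column lar follow the query above, the sample strings are my own, and SQLite's like is case-insensitive for ASCII by default, which other databases may not be.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("create table bar (lar text)")
words = ["Lto", "Ato", "ltoo", "rtoto", "to", "toto", "he%llo", "hello"]
conn.executemany("insert into bar values (?)", [(w,) for w in words])


def matching(where_clause):
    """Return the values of lar that satisfy the given where clause."""
    rows = conn.execute(
        f"select lar from bar where {where_clause} order by rowid"
    ).fetchall()
    return [r[0] for r in rows]


# One character, then "to", then anything (possibly nothing).
underscore_matches = matching("lar like '_to%'")
# The backslash makes the first % a literal percent sign.
escaped_matches = matching(r"lar like 'he\%%' escape '\'")
# not like returns the complement of the first query.
not_like_matches = matching("lar not like '_to%'")

print(underscore_matches)  # ['Lto', 'Ato', 'ltoo', 'rtoto']
print(escaped_matches)     # ['he%llo']
print(not_like_matches)    # ['to', 'toto', 'he%llo', 'hello']
```

Note that “to” and “toto” do not match '_to%', because the underscore must consume exactly one character before the “to”.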

SQL:1999 also allows similar to, which is similar to Unix regular expressions.

Drop vs. Delete

drop table r removes all the tuples from r and also removes the schema of r, whereas

delete from r removes only the tuples from r, leaving the schema in place so you can still add values to the table.
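The difference is easy to see in a quick experiment. This is a minimal sketch using Python's built-in sqlite3 module; the single column x and the helper count_rows are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("create table r (x integer)")
conn.executemany("insert into r values (?)", [(1,), (2,), (3,)])


def count_rows():
    """Count the tuples currently in r."""
    return conn.execute("select count(*) from r").fetchall()[0][0]


# delete removes the tuples but keeps the schema, so inserts still work.
conn.execute("delete from r")
rows_after_delete = count_rows()
conn.execute("insert into r values (4)")
rows_after_insert = count_rows()

# drop removes the schema as well, so any later use of r fails.
conn.execute("drop table r")
try:
    conn.execute("insert into r values (5)")
    insert_after_drop_failed = False
except sqlite3.OperationalError:
    insert_after_drop_failed = True

print(rows_after_delete, rows_after_insert, insert_after_drop_failed)
# 0 1 True
```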

References

Shepherd, John. COMP3311 09s1 Lecture Slides. http://www.cse.unsw.edu.au/~cs3311/09s1/lectures/. (Diagrams have also been sourced from here).

Silberschatz. Database System Concepts. 5th Ed.

COMP3311 – Wk01

Just a couple random notes, to reiterate some things I need to become acquainted with. Definitely not comprehensive.

The ER Diagram

Cardinalities

- Each manager manages exactly one branch, and each branch is managed by exactly one manager.

- Each branch holds zero or more accounts, but each account is held by at most one branch.

- Each customer owns zero or more accounts and each account is owned by zero or more customers.

Participation

Not all customers must take out a loan (or it is not the case that every customer takes out a loan), but every loan is taken out by at least one customer. i.e. Every loan is associated with at least one person, but every person is not necessarily associated with at least one loan.

Attributes Linked to Relationships

In (a) you know how much time a particular person spends on a project. In (b) you only know how much time has been spent on a particular project. You don’t know the distribution of that time among the researchers who have worked on it.

References

Shepherd, John. COMP3311 09s1 Lecture Slides. http://www.cse.unsw.edu.au/~cs3311/09s1/lectures/. (Diagrams have also been sourced from here).

SENG4921 – Lec 02 – Moral Reasoning & Professional Ethics

Part II of Stephen Cohen’s lecture, (audio here) (2008 lecture slides here). Just as a side note, I think I’ve picked up more by listening to the audio, where I can stop it to think about what was said, than I have by going to the lecture. Also, the content of the two lectures doesn’t fall neatly under their titles. Some of the material from lecture one falls under lecture two’s title, and some of the material from lecture two falls under lecture one’s title.

Cohen started by talking about some ethical theories. Here is a diagram based on the one we were shown.

On the left we have the consequential group of ethical theories, based on the view that “Acts are right based on their consequences.” Under this umbrella there are four different views as shown.

- Egoism – “An act is right insofar as it advances my welfare.” Although nowadays people tend to contrast this with thinking about ethics.

- Utilitarianism – “Acts are right insofar as they produce happiness and they are wrong insofar as they don’t produce happiness.” The wrong way to think about utilitarianism would be “the greatest good, for the greatest number”; rather, it is about maximising happiness, not distributing it most widely. For example if I had $100 to distribute to 50 people, it may well be that giving the whole $100 to one person would produce greater happiness than the sum of happiness of 50 people each getting $2. If this were so, then the utilitarian view would be to give the whole $100 to that one person.

- Nationalism – Acts are right if they are in the best interest of the nation as a whole.

- Epistemism – “Acts are right insofar as they advance our knowledge, and they are wrong if they don’t do that.“

On the right we have another view where ethics is based on non-consequential things. That is, to determine if an act is right or wrong it in fact has nothing to do with the consequences of the act. Instead it has to do with other things such as rights, duties, contracts, fairness, etc.

Or to put it as Ken Robinson and Achim Hoffman have it in their introduction article,

- Deontological in which the reasoning is based on axioms or laws, for example, “You shall not lie”

- Teleological in which the reasoning is based on outcomes.

Kant was a non-consequential thinker. His idea (as I have interpreted it) was that good will is what makes an act right, where good will is recognising your duty and then being able to make yourself do it (eg. you crash into a parked car, you realise it is your duty to leave your details, and then you’re able to make yourself do that, even if you don’t want to).

Cohen then goes on to make a very good point about autonomy. The audio segment is embedded here, (or direct download)

“Kant was the person, and this has been a feature of thinking about ethics ever since Kant. A number of people think that a really important feature about ethical performance is autonomy, autonomy. Which means being free from various kinds of constraints and being able to generate something all on your own. Autonomy, I do it not because I have to, not because I’ve been trained to do it. In that respect you see you could be free …–go do whatever you want, but you’ve now been as it were brainwashed to do a certain kind of thing–. You would be free but you wouldn’t be autonomous. Autonomous is being able to come up with the principle, to generate it yourself and then make yourself do it. And that has been a very important element in thinking about ethics.” –Stephen Cohen, 2009.

Aristotle on the other hand thinks ethics is about having the right character. Imagine there’s a person walking along a pier who sees someone drowning. The person with a good character (a good kind of person) doesn’t think twice; they pick up the lifefloat and throw it to them. This person has good ethical values as a result of their good character. Taking another approach, consider the same situation but now with someone who doesn’t have good character and doesn’t value human life. They don’t want to save this person, but they know that they ought to, so they throw the lifefloat anyway. This person does not have good character, but they have good ethical values because they recognised what they ought to do, and they did it. This is Kant’s view.

Another view, sometimes called contractarianism, focuses on “Keeping the terms of the contract.”

Another slide that Cohen showed was this.

Cohen makes the point that in order to have made a moral judgement you need to make a judgement, have some justification for that judgement, and also have some principle that led you to believe that the justification was right.

judgement > justification > principle

If you don’t have this, you don’t have a moral judgement. He also says that this is how you can discover that something is not a moral judgement but rather a bias or preference towards that judgement: you don’t have judgement > justification > principle.

You may be more committed to a particular judgement than to the principle. Cohen then goes on to say that he thinks this is how we go about moral reasoning. We try to put these two together. Sometimes we modify the judgement in light of the principle, and sometimes we modify the principle in light of the judgement. These are exceptions to the rule (or the principle).

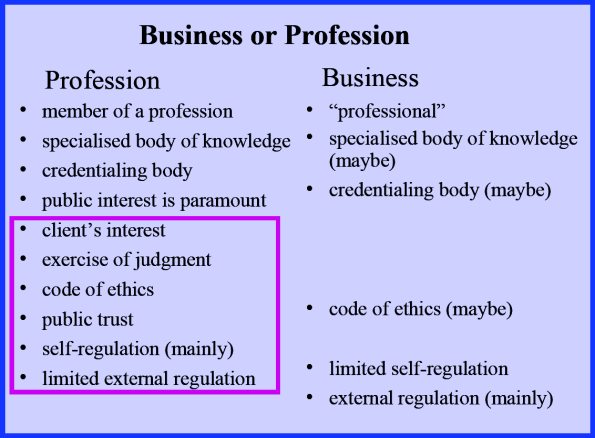

Cohen then talks about business and the profession.

The big difference between a profession and a business is that professions have this extra bit about the public interest and the client interest. They have a duty to survey the whole landscape and do what is best. Professions and professionals must do that. You can’t be a profession if you have some extra incentive (eg. being paid to do something against the client’s and the public’s interest).

“A person’s having a conflict of interest is not the same thing as a person’s being affected by a conflict of interest.” That people say they are not affected by a conflict of interest (or more specifically that their judgement is not affected by it) doesn’t mean they don’t have one.

Two more good slides. This first one shows two organisational models, one where the top has great power, with this comes great responsibility, the other where they all have power, with this comes great trust.

The second shows some of the modern differences between a Code of Ethics and a Code of Conduct.

Any principle/value will require judgement. Say you subscribe to the principle of honesty. At some stage you will need to make a judgement based on this principle. For example (and Cohen makes the story sound more convincing than I do here) you are at home with your close friend, Bob. Bob says he is scared as some crazy person is out to get him. Someone then knocks on your door, you answer, and there is a big strong man there with a knife in his hand who asks whether Bob is here. Honesty does not require that you say yes, he is here. You make a judgement on the principle/value, and in this respect Codes of Ethics are empowering.

Codes of Conduct are different. They are not for introducing new values. They are there to remove judgement. They tell you exactly what to do in specific situations. One area they may deal with is gifts/bribes. They may say that you cannot accept any gift over a given amount. They take the heat off.

Although Cohen did not cover this in the lecture, the lecture slides from last year looked into management and leadership issues and ways to promote ethical behaviour in an organisation. I find this interesting so I’ll go over a couple of these things here.

- Leadership involves authorising and empowering others to behave ethically.

- People are more likely to behave ethically when:

- managers behave ethically

- organisational values are clear

- ethical behaviour is rewarded

- sanctions for unethical behaviour are clear

- there is practical ethics training

One last thing about morals and ethics. Back in COMP1917 Richard Buckland made a good statement. He said something along the lines of: come up with your own ethics and moral beliefs, and stick to them. I think this is good advice.

Also here are some notes made by the COMP1917 class which seem relevant.

- “Ethics is the way you behave when people are not around and there is a spanking new Alienware laptop sitting on the table in front of you waiting for you to steal.

- When you face ethical dilemmas at work, don’t forget you can ask other professionals from your field for advice on how to handle such situations.

- Discussed a large range of examples of ethical dilemmas and scenarios which present interesting problems:

- Hitler’s guards – Each of Hitler’s guards, when told to throw the next lot of prisoners into the gas chambers must have each thought inside that they didn’t want to do it, but nobody had the courage to say anything. Maybe if one guard refused, all the others would, then a message might get sent that what they were doing was wrong.

- Richard’s friends – was put on the spot by her boss in an interview with a client to lie about the progress of her software. In spur of the moment she lied and ever since the boss does it regularly now. She feels like she is doing the wrong thing and doesn’t know what to do.

- Richard’s advice on ethics was for each person to sit down and work out exactly what they think is ethically wrong and ethically right, and then never break that. It doesn’t matter what he thinks, in the end it’s YOUR ethics and morals that count for yourself. He said that breaking morals breaks the spirit and that we get extreme happiness from obeying our morals and doing the right thing. He also said we need to plan ahead, and attempt to predict ethical dilemmas before they arise, that way we can think about them and make a proper decision, since during the heat of the moment we will often give into temptation and fail miserably.

- Whistleblowing – extremely difficult thing to do, the company/government will attempt to discredit your character and make a mess of your image etc. If anyone feels like whistleblowing read a book called “the Whistleblowers Handbook” by Brian Martin. Richard says its a great read.”– COMP1917 08s1 Class.

References:

Cohen, Stephen. 2009s1 SENG4921 Lecture. Audio. 2008 Slides.

COMP2121 – Wk02

This week we learnt some of the things that separate assembly language from machine code in the context of AVR (or should I say AVR Studio).

Important Note 1: Assembly Language

In AVR assembly an input line takes the form of one of these, ([foo] = Optional)

[label:] directive [operands] [Comment]

[label:] instruction [operands] [Comment]

Comment

Empty Line

where a comment has form,

; [Text]

Important Note 2: Pseudo Instructions

AVR Assembly (using AVR studio) has some additional commands that are not part of the AVR instruction set, but which the assembler (that is part of AVR studio) interprets when it assembles the program.

Pseudo instructions (assembler directives) are recognised by their preceding ‘.’ (dot character). eg,

.equ CONST = 31

On assembly, this replaces every occurrence of CONST in the code with 31.

Here are the AVR assembly Pseudo Instructions.

| Directive | Description |
|---|---|
| BYTE | Reserve byte to a variable |
| CSEG | Code Segment |
| CSEGSIZE | Program memory size |
| DB | Define constant byte(s) |
| DEF | Define a symbolic name on a register |
| DEVICE | Define which device to assemble for |
| DSEG | Data Segment |
| DW | Define Constant word(s) |
| ENDM, ENDMACRO | End macro |
| EQU | Set a symbol equal to an expression |
| ESEG | EEPROM Segment |
| EXIT | Exit from file |
| INCLUDE | Read source from another file |
| LIST | Turn listfile generation on |
| LISTMAC | Turn Macro expansion in list file on |
| MACRO | Begin macro |
| NOLIST | Turn listfile generation off |
| ORG | Set program origin |
| SET | Set a symbol to an expression |

.byte – Reserve some space (only allowed in dseg). eg.

.dseg

var1: .byte 4 ;reserve 4 bytes and store address in var1

.CSEG

ldi r30, low(var1) ; Load address into Z low register

ldi r31, high(var1) ; Load address into Z high register

ld r1, Z ; Load VAR1 into register 1

…and some more…

.def FOO=r30 ;give register 30 the symbolic name FOO

.equ var = 2 ;like C's #define statement

.set var = 2 ;like a global variable in C

Important Note 3: Segments

There are three segments in an AVR assembly program. When writing an assembly program you need to specify which segment you are referring to, which you do using the AVR assembler directive shown in brackets below.

- Code Segment (.cseg)

- Data Segment (.dseg)

- EEPROM Segment (.eseg)

“The CSEG directive defines the start of a Code Segment. An Assembler file can consist of several Code Segments, which are concatenated into one Code Segment when assembled. The BYTE directive can not be used within a Code Segment. The default segment type is Code. The Code Segments have their own location counter which is a word counter. The ORG directive can be used to place code and constants at specific locations in the Program memory. The directive does not take any parameters.” [1]

Notes from the Lab

Assembly Code and Machine Code

In the lab we looked at this AVR assembly program,

.include "m64def.inc"

.def a =r16 ; define a to be register r16

.def b =r17

.def c =r18

.def d =r19

.def e =r20

main: ; main is a label

ldi a, 10 ; load 10 into r16

ldi b, -20

mov c, a ; copy the value of r16 into r18

add c, b ; add r18 and r17 and store the result in r18

mov d, a

sub d, b ; subtract r17 from r19 and store the result in r19

lsl c ; refer to AVR Instruction Set for the semantics of

asr d ; lsl and asr

mov e, c

add e, d

loop:

inc r21 ; increase the value of r21 by 1

rjmp loop ; jump to loop

The machine code executable produced by AVR studio was 24 bytes long, the question was why. It’s actually quite simple: all of the instructions here are 1 word (2 bytes = 16 bits) and there are 12 instructions, so the total is 24 bytes. One thing that initially confused me was the weird encoding. Back in COMP1917 I got the impression that something like mov r16, r17 would be represented in machine code as some number for the mov operation followed by two more numbers of the same bit size for the registers. However, this varies depending on the architecture.

For example the mov operation, MOV Rd, Rr has encoding 0010 11rd dddd rrrr. The opcode takes up 6 bits, the source register takes up 5 bits and the destination register takes up 5 bits (total 16 bits). The source and destination bits are interleaved because this makes the hardware implementation easier.
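To check my reading of the bit layout, here is a small Python sketch that packs the fields of MOV Rd, Rr into a 16 bit word following the pattern 0010 11rd dddd rrrr. The function name encode_mov is my own, and the sketch only covers this one instruction.

```python
def encode_mov(d, r):
    """Encode MOV Rd, Rr as the 16-bit word 0010 11rd dddd rrrr."""
    if not (0 <= d <= 31 and 0 <= r <= 31):
        raise ValueError("register numbers must be in 0..31")
    opcode = 0b001011 << 10       # fixed top 6 bits
    r_high = ((r >> 4) & 1) << 9  # bit 4 of the source register
    d_bits = (d & 0x1F) << 4      # d4 lands in bit 8, d3..d0 in bits 7..4
    r_low = r & 0x0F              # low 4 bits of the source register
    return opcode | r_high | d_bits | r_low


print(format(encode_mov(18, 16), "04X"))  # 2F20
print(format(encode_mov(19, 16), "04X"))  # 2F30
print(format(encode_mov(20, 18), "04X"))  # 2F42
```

These three words are the ones that appear for mov c, a, mov d, a and mov e, c in the machine code for the lab program.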

The program above has machine code (in hexadecimal),

E00A EE1C 2F20 0F21 2F30 1B31 0F22 9535 2F42 0F43 9553 CFFE

and annotated,

+00000000: E00A LDI R16,0x0A Load immediate

+00000001: EE1C LDI R17,0xEC Load immediate

+00000002: 2F20 MOV R18,R16 Copy register

+00000003: 0F21 ADD R18,R17 Add without carry

+00000004: 2F30 MOV R19,R16 Copy register

+00000005: 1B31 SUB R19,R17 Subtract without carry

+00000006: 0F22 LSL R18 Logical Shift Left

+00000007: 9535 ASR R19 Arithmetic shift right

+00000008: 2F42 MOV R20,R18 Copy register

+00000009: 0F43 ADD R20,R19 Add without carry

+0000000A: 9553 INC R21 Increment

+0000000B: CFFE RJMP PC-0x0001 Relative jump

Status Register

Stepping through this program also shows a few of the status flags in use. The status register, like all the other registers, has 8 bits, namely,

| SREG | Status Register |
|---|---|
| C | Carry Flag |
| Z | Zero Flag |
| N | Negative Flag |
| V | Two’s complement overflow indicator |
| S | N ⊕ V, For signed tests |
| H | Half Carry Flag |
| T | Transfer bit used by BLD and BST instructions |
| I | Global Interrupt Enable/Disable Flag |

Last week we saw how signed and unsigned numbers are stored. If you look at the program above you will see that the last part just increments a register over and over in an infinite loop. Stepping through this shows us what the status register does as we do this. Remember that AVR does all arithmetic in two’s complement.

| Register value | Flags set |
|---|---|
| 0 | H Z |
| 1-127 | H |
| 128 | H V N |
| 129-255 | H S N |

As you can see, the values that are negative under two’s complement (128-255) have the N (negative) flag. Also, going from 127 to 128 under two’s complement means going from 127 to -128, so the V (two’s complement overflow indicator) flag is set. 0 has the zero flag.
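The pattern in the table can be reproduced with a short Python sketch of how I understand INC to update the flags. The function name inc_flags is my own. As far as I can tell from the instruction set documentation, INC only touches Z, N, V and S, so the sketch leaves out H and C; the H shown in the table would then have been left over from an earlier instruction.

```python
def inc_flags(value):
    """Return (result, flags) after INC on an 8-bit register value."""
    result = (value + 1) & 0xFF
    n = bool(result & 0x80)  # N: bit 7 of the result
    v = result == 0x80       # V: only 0x7F -> 0x80 overflows
    z = result == 0          # Z: result is zero
    s = n != v               # S: N xor V
    pairs = (("Z", z), ("N", n), ("V", v), ("S", s))
    flags = "".join(name for name, is_set in pairs if is_set)
    return result, flags


print(inc_flags(0xFF))  # (0, 'Z')
print(inc_flags(0x00))  # (1, '')
print(inc_flags(0x7F))  # (128, 'NV')
print(inc_flags(0x80))  # (129, 'NS')
```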

The Rest

The lecture notes go over some of the AVR instructions but I think the docs provided by Atmel are fine. What I do think needs explaining (and me learning) is the carry flag and the difference between operations like add without carry (ADD) and add with carry (ADC).

Here is how I understand the carry bit. Say we have 4 bit registers and try to add (in binary) 1000 and 1000. The answer is 10000, however this is 5 bits and we only have 4 bits available to store the result. An overflow has occurred. To introduce some terminology, the most significant bit (msb) is the left most bit as the number is normally written, and the least significant bit (lsb) is the right most. (For multi-byte values, the order in which the bytes are stored depends on whether the machine is big-endian or little-endian.) The carry bit (C flag) is the carry out of the msb. It indicates overflow when the operands are treated as unsigned; overflow of signed (two’s complement) operands is indicated by the V flag (Two’s complement overflow indicator) instead.

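So getting back to ADD and ADC, ADD just adds the two numbers without adding in the current value of the carry flag, whereas ADC adds the two numbers plus the carry flag. Both set the C flag according to the carry out of the result, which is what lets an ADD on the low bytes followed by an ADC on the high bytes add multi-byte numbers. At least this is what I’ve observed, though I’m not 100% sure on this.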
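Here is a rough Python model of that idea, adding two 16 bit numbers one byte at a time. The helper names add8 and add16 are made up for this sketch, and it models only the result and the carry flag, not the other status flags.

```python
def add8(a, b, carry_in=0):
    """8-bit add returning (result, carry_out).

    carry_in=0 models ADD; passing the current C flag models ADC.
    """
    total = (a & 0xFF) + (b & 0xFF) + carry_in
    return total & 0xFF, total >> 8


def add16(x, y):
    """Add two 16-bit values: ADD on the low bytes, ADC on the high bytes."""
    low, carry = add8(x & 0xFF, y & 0xFF)                   # ADD
    high, carry = add8((x >> 8) & 0xFF, (y >> 8) & 0xFF, carry)  # ADC
    return (high << 8) | low, carry


print(add8(0xFF, 0x01))       # (0, 1)
print(add16(0x01FF, 0x0001))  # (512, 0)
print(add16(0xFFFF, 0x0001))  # (0, 1)
```

In the second call the low bytes overflow (0xFF + 0x01), and the carry they produce is what bumps the high byte from 0x01 to 0x02.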

References

[1] AVR Assembler User Guide. http://www.atmel.com/atmel/acrobat/doc1022.pdf

MATH1081 and MATH1231 Cheat Sheets

Just cross posting my cheat sheets from MATH1081 and MATH1231. PDF and LaTeX source (WordPress.com won’t allow .tex uploads) are provided. These are from 08s2, at UNSW. I’ve released them into the public domain; if that is not legally possible in Australia then lobby your parliamentary representative.

MATH1081 LaTeX Source

%This work is hereby released into the Public Domain. To view a copy of the public domain dedication, visit http://creativecommons.org/licenses/publicdomain/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA.

\documentclass[a4paper,10pt]{article}

\usepackage{verbatim}

\usepackage{amsmath}

\usepackage{amssymb}

\setlength\parindent{0mm}

\usepackage{fullpage}

\usepackage{array}

\usepackage[all]{xy}

\usepackage[pdftex,

pdfauthor={Andrew Harvey},

pdftitle={MATH1081 Cheat sheet 2008},

pdfsubject={},

pdfkeywords={}]{hyperref}

\begin{document}

\section*{Enumeration}

\subsection*{Counting Methods}

Let $\#(n)$ denote the number of ways of doing $n$ things. Then,

$$\#(A\; \mbox{and}\; B) = \#(A) \times \#(B)$$

$$\#(A\; \mbox{or}\; B) = \#(A) + \#(B)$$

($n$ items, $r$ choices)\\

Ordered selection with repetition, $n^r$.\\

Ordered selection without repetition, $P(n,r) = \frac{n!}{(n-r)!}$.\\

Unordered selection without repetition, $C(n,r) = \frac{P(n,r)}{r!}$.\\

$|A \cup B| = |A| + |B| - |A \cap B|$\\

Ordered selection with repetition; WOOLLOOMOOLOO problem.\\

Unordered selection with repetition; dots and lines,

$$\binom{n+r-1}{n-1}$$

Pigeonhole principle. If you have n holes and more than n objects, then there must be at least 1 hole with more than 1 object.

\section*{Recurrences}

\subsection*{Formal Languages}

$\lambda$ represents the \textit{empty word}.

$w$ is just a variable (it is not part of the language)

\subsection*{First Order Homogeneous Case}

The recurrence,\\

\begin{center}$a_n = ra_{n-1}$ with $a_0=A$\\\end{center}

has solution\\

$$a_n=Ar^n.$$

\subsection*{Second Order Recurrences}

$$a_n + pa_{n-1} + qa_{n-2} = 0$$

has characteristic,

$$r^2 + pr + q = 0$$

If $\alpha$ and $\beta$ are the solutions to the characteristic equation, and if they are real and $\alpha \ne \beta$ then,

$$a_n = A\alpha^n + B\beta^n.$$

If $\alpha = \beta$ then,

$$a_n = A\alpha^n + Bn\beta^n.$$

\subsection*{Guesses for a particular solution}

\begin{tabular}{c|c}%{m{width} | m{width}}

RHS & Guess \\ \hline

$3$ & $c$ \\

$3n$ & $cn + d$\\

$3\times 2^n$ & $c2^n$\\

$3n2^n$ & $(cn+d)2^n$\\

$(-3)^n$ & $c(-3)^n$\\

\end{tabular}

\end{document}

MATH1231-ALG LaTeX Source

%This work is hereby released into the Public Domain. To view a copy of the public domain dedication, visit http://creativecommons.org/licenses/publicdomain/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA.

\documentclass[a4paper,10pt]{article}

\usepackage{verbatim}

\usepackage{amsmath}

\usepackage{amssymb}

\setlength\parindent{0mm}

\usepackage{fullpage}

\usepackage[pdftex,

pdfauthor={Andrew Harvey},

pdftitle={MATH1231 Algebra Cheat sheet 2008},

pdfsubject={},

pdfkeywords={},

pdfstartview=FitB]{hyperref}

\begin{document}

\section*{Vector Spaces}

\subsection*{Vector Spaces}

Vector Space - 10 rules to satisfy, including \begin{small}\textit{(Where $V$ is a vector space.)}\end{small}

\begin{itemize}

\item Closure under addition. If $\mathbf{u}, \mathbf{v} \in V$ then $\mathbf{u} + \mathbf{v} \in V$

\item Existence of zero. $\mathbf{0} \in V$

\item Closure under scalar multiplication. If $\mathbf{u} \in V$ then $\lambda \mathbf{u} \in V$, where $\lambda \in \mathbb{R}$

\end{itemize}

\subsection*{Subspaces}

Subspace Theorem:\\

A subset $S$ of a vectorspace $V$ is a subspace if:

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item $S$ contains the zero vector.

\item If $\mathbf{u}, \mathbf{v} \in S$ then $\mathbf{u} + \mathbf{v} \in S$ and $\lambda \mathbf{u} \in S$ for all scalars $\lambda$.

\end{enumerate}

\subsection*{Column Space}

The column space of a matrix $A$ is defined as the span of the columns of $A$, written $\mbox{col}(A)$.

\subsection*{Linear Independence}

Let $S = \{\mathbf{v_1}, \mathbf{v_2}, \dots, \mathbf{v_n}\}$ be a set of vectors.

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item If we can find scalars $\alpha_1, \alpha_2, \dots, \alpha_n$ not all zero such that

\begin{center}$\alpha_1\mathbf{v_1} + \alpha_2\mathbf{v_2} + \dots + \alpha_n\mathbf{v_n} = 0$\end{center}

then we call $S$ a linearly dependent set and say that the vectors in $S$ are linearly dependent.

\item If the only solution of

\begin{center}$\alpha_1\mathbf{v_1} + \alpha_2\mathbf{v_2} + \dots + \alpha_n\mathbf{v_n} = 0$\end{center}

is $\alpha_1 = \alpha_2 = \dots = \alpha_n = 0$ then $S$ is called a linearly independent set and the vectors in $S$ are said to be linearly independent.

\end{enumerate}

\subsection*{Basis}

A set $B$ of vectors in a vectorspace $V$ is called a basis if

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item $B$ is linearly independent, and

\item $V = \mbox{span}(B)$.

\end{enumerate}

An orthonormal basis is formed where all the basis vectors are unit length and are mutually orthogonal (the dot product of any two is zero).

\subsection*{Dimension}

The dimension of a vectorspace $V$, written dim($V$) is the number of basis vectors.

\section*{Linear Transformations}

\subsection*{Linear Map}

A function $T$ which maps from a vectorspace $V$ to a vectorspace $W$ is said to be linear if, for all vectors $\mathbf{u}, \mathbf{v} \in V$ and for any scalar $\lambda$,

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item $T(\mathbf{u} + \mathbf{v}) = T(\mathbf{u}) + T(\mathbf{v})$,

\item $T(\lambda\mathbf{u}) = \lambda T(\mathbf{u})$.

\end{enumerate}

The columns of the transformation matrix are simply the images of the standard basis vectors.

\subsection*{The Kernel}

$\mbox{im}(T) = \mbox{col}(A)$\\

The kernel of a linear map $T : V \to W$, written ker($T$), consists of the set of vectors $\mathbf{v} \in V$ such that $T(\mathbf{v}) = \mathbf{0}$.\\

If A is the matrix representation of a linear map $T$, then the kernel of $A$ is the solution set of $A\mathbf{x} = \mathbf{0}$.

\subsection*{Rank-Nullity}

The dimension of the image of a linear map $T$ is called the rank of $T$, written rank($T$). (Maximum number of linearly independent columns of A)\\

The dimension of the kernel of a linear map $T$ is called the nullity of $T$, written nullity($T$). (Number of parameters in the solution set of $A\mathbf{x}=\mathbf{0}$)\\

If $T$ is a linear map from $V$ to $W$ then

$$\mbox{rank}(T) + \mbox{nullity}(T) = \mbox{dim}(V)$$

\section*{Eigenvectors and Eigenvalues}

If $A$ is a square matrix, $\mathbf{v} \ne \mathbf{0}$ and $\lambda$ is a scalar such that,

$$A\mathbf{v} = \lambda\mathbf{v}$$

then $\mathbf{v}$ is an eigenvector of $A$ with eigenvalue $\lambda$.\\

\begin{comment}

\begin{equation*}

A\mathbf{v} = \lambda\mathbf{v}

\implies (A-\lambda I)\mathbf{v} = \mathbf{0}

A-\lambda I \mbox{has no inverse (otherwise } \mathbf{v} = \mathbf{0} \mbox{)}

\mbox{so set det}(A-\lambda I) = 0

\mbox{this finds the eigenvalues}

\mbox{Also since}

(A-\lambda I)\mathbf{v} = \mathbf{0}

\mathbf{v} \in \mbox{ker}(A-\lambda I)

\mbox{this gives eigenvectors.}

\end{equation*}

\end{comment}

Eigenvalues: Set $\mbox{det}(A-\lambda I) = 0$ and solve for $\lambda.$\\

Eigenvectors: For each value of $\lambda$ find the kernel of $(A-\lambda I)$.

\subsection*{Diagonalisation}

If $A$ has $n$ (independent) eigenvectors then put,\\

$P = (\mathbf{v_1}|\mathbf{v_2}|...|\mathbf{v_n})$ (eigenvectors $\mathbf{v}$)\\

and\\

$D =

\begin{pmatrix}

\lambda_1 & & 0 \\

& \ddots & \\

0 & & \lambda_n

\end{pmatrix}

$ (eigenvalues $\lambda$)\\

\\

so then\\

$A^k = PD^kP^{-1}$, for each non-negative integer $k$.\\

\\

Remember that when $A =

\bigl( \begin{smallmatrix}

a&b\\ c&d

\end{smallmatrix} \bigr)$, $A^{-1} = \frac{1}{\mbox{det}(A)}

\begin{pmatrix}

d & -b\\

-c & a\end{pmatrix}$

\subsection*{Systems of Differential Equations}

The system

$\begin{cases}

\frac{dx}{dt} = 4x + y\\

\frac{dy}{dt} = x + 4y

\end{cases}$

can be written $\mathbf{x}'(t) = \begin{pmatrix} 4 & 1\\1 & 4\end{pmatrix}\mathbf{x}(t)$ where $\mathbf{x}(t) = \binom{x(t)}{y(t)}.$

We can guess the solution to be $\mathbf{x}(t) = \alpha \mathbf{v} e^{\lambda t}$ (and add for all the eigenvalues). Where $\mathbf{v} $ and $ \lambda$ are the eigenvectors and eigenvalues respectively.

\section*{Probability and Statistics}

\subsection*{Probability}

Two events $A$ and $B$ are mutually exclusive if $A \cap B = \emptyset$.

$$P(A^c) = 1 - P(A)$$

$$P(A \cup B) = P(A) + P(B) - P(A \cap B)$$

\subsection*{Independence}

Two events $A$ and $B$ are physically independent of each other if the probability that one of them occurs is not influenced by the occurrence or non occurrence of the other. These two events are statistically independent if, $$P(A \cap B) = P(A).P(B).$$

\subsection*{Conditional Probability}

Probability of $A$ given $B$ is given by,

$$P(A|B) = \frac{P(A \cap B)}{P(B)}$$

\subsection*{Bayes Rule}

\subsection*{Discrete Random Variables}

$$p_k = P(X=k) \qquad \mbox{(} \{p_k\}\mbox{ is the probability distribution)}$$

where, $X$ is a discrete random variable, and $P(X=k)$ is the probability that $X=k$.\\

For $\{p_k\}$ to be a probability distribution,

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item $p_k \ge 0$ for $k = 0, 1, 2, \dots$

\item $p_0 + p_1 + \dots = 1$

\end{enumerate}

\subsection*{Mean and Variance}

$E(X)$ denotes the mean or expected value of X.\\

%$$E(X) = \sum_{i=1}^{k} p_i x_i \qquad $$

$$E(X) = \sum_{\mbox{all }k} kp_k$$

$$\mbox{Var}(X) = E(X^2) - E(X)^2 \qquad \mbox{where } E(X^2) = \sum_{\mbox{all } k} k^2 p_k$$

\subsection*{Binomial Distribution}

If we perform a binomial experiment (i.e. 2 outcomes) $n$ times, and each time there is a probability $p$ of success then,

$$P(X=k) = \binom{n}{k} p^k (1-p)^{n-k},\qquad \mbox{for } 0\le k \le n \mbox{ and 0 otherwise}.$$

\subsection*{Geometric Distribution}

$$P(X=k) = p(1-p)^{k-1}, \;\; k = 1, 2, \dots$$

\end{document}

MATH1231-CALC LaTeX Source

%This work is hereby released into the Public Domain. To view a copy of the public domain dedication, visit http://creativecommons.org/licenses/publicdomain/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA.

\documentclass[a4paper,10pt]{article}

\usepackage{verbatim}

\usepackage{amsmath}

\usepackage{amssymb}

\setlength\parindent{0mm}

\usepackage{fullpage}

\usepackage[all]{xy}

\usepackage[pdftex,

pdfauthor={Andrew Harvey},

pdftitle={MATH1231 Calculus Cheat sheet 2008},

pdfsubject={},

pdfkeywords={},

pdfstartview=FitB]{hyperref}

\begin{document}

\section*{Functions of Several Variables}

\subsection*{Sketching}

\begin{itemize}

\item Level Curves

\item Sections

\end{itemize}

\subsection*{Partial Derivatives}

$$\frac{\partial^2 f}{\partial y \partial x} = \frac{\partial^2 f}{\partial x \partial y}$$

on every open set on which $f$ and the partials,\\

$$\frac{\partial f}{\partial x}, \frac{\partial f}{\partial y}, \frac{\partial^2 f}{\partial y \partial x}, \frac{\partial^2 f}{\partial x \partial y}$$

are continuous.

\subsection*{Normal Vector}

$$\mathbf{n} = \pm

\begin{pmatrix}

\frac{\partial f(x_0, y_0)}{\partial x}\\

\frac{\partial f(x_0, y_0)}{\partial y}\\

-1\\

\end{pmatrix}

$$

\subsection*{Error Estimation}

$$f(x_0 + \Delta x, y_0 + \Delta y) \approx f(x_0, y_0) + \left[ \frac{\partial f}{\partial x} (x_0, y_0)\right] \Delta x + \left[ \frac{\partial f}{\partial y} (x_0, y_0)\right] \Delta y$$
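
As a quick check of this approximation (a made-up example, not from the notes), take $f(x,y) = x^2 y$ at $(x_0, y_0) = (1, 2)$ with $\Delta x = 0.1$ and $\Delta y = -0.1$. Here $\frac{\partial f}{\partial x} = 2xy = 4$ and $\frac{\partial f}{\partial y} = x^2 = 1$ at $(1,2)$, so,

$$f(1.1, 1.9) \approx 2 + 4(0.1) + 1(-0.1) = 2.3$$

The exact value is $1.1^2 \times 1.9 = 2.299$.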

\subsection*{Chain Rules}

Example, $z = f(x,y)\ \mbox{with}\ x = x(t), y = y(t)$

\begin{displaymath}

\xymatrix{

& f \ar[dl] \ar[dr] & \\

x \ar[d] & & y \ar[d]\\

t & & t}

\end{displaymath}

$$\frac{df}{dt} = \frac{\partial f}{\partial x} \cdot \frac{dx}{dt} + \frac{\partial f}{\partial y} \cdot \frac{dy}{dt}$$
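
For instance (an illustrative example, not from the notes), with $f(x,y) = xy$, $x = t^2$ and $y = t^3$,

$$\frac{df}{dt} = y \cdot 2t + x \cdot 3t^2 = 2t^4 + 3t^4 = 5t^4$$

which agrees with differentiating $f = t^5$ directly.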

\section*{Integration}

\subsection*{Integration by Parts}

Integrate the product rule,

$$u(x)v(x) = \int u'(x)v(x)\; dx + \int v'(x)u(x)\; dx$$
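
Rearranged, this is the usual form $\int u(x)v'(x)\; dx = u(x)v(x) - \int u'(x)v(x)\; dx$. As a small example (not from the notes), taking $u = x$ and $v = e^x$,

$$\int x e^x\; dx = x e^x - \int e^x\; dx = x e^x - e^x + C$$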

\subsection*{Integration of Trig Functions}

For $\int \sin^2 x\; dx$ and $\int \cos^2 x\; dx$ remember that,\\

$$\cos 2x = \cos^2 x - \sin^2 x$$

%$$\sin 2x = 2\sin x \cos x$$

For integrals of the form $\int \cos^m x \sin^n x\; dx$, when $m$ or $n$ is odd, you can factorise using $\cos^2 x + \sin^2 x = 1$ and then use,

$$\int \sin^k x \cos x\; dx = \frac{1}{k+1} \sin^{k+1} x + C$$

$$\int \cos^k x \sin x\; dx = \frac{-1}{k+1} \cos^{k+1} x + C$$
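
For example (a sketch of the odd-power case, not from the notes), with $m = 0$ and $n = 3$,

$$\int \sin^3 x\; dx = \int (1 - \cos^2 x) \sin x\; dx = -\cos x + \frac{1}{3} \cos^3 x + C$$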

\subsection*{Reduction Formulae}

...

\subsection*{Partial Fractions}

Example, assume

$$\frac{2x-1}{(x+3)(x+2)^2} = \frac{A}{x+3} + \frac{Bx + C}{(x+2)^2}$$

\begin{comment}

\begin{enumerate}

\renewcommand{\labelenumi}{\roman{enumi})}

\item $\frac{2x-1}{x+2} = A + \frac{B(x+3)}{x+2}$ subs $x=-3 \to A=7$

\item $\frac{2x-1}{x+3} = \frac{A(x+2)}{x+3} + B$ subs $x=-2 \to B=-5$

\end{enumerate}

\end{comment}

Now multiply both sides by $(x+3)(x+2)^2$ and equate coefficients.
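
Carrying this through for the example above (the working below is not in the original notes), clearing the denominator $(x+3)(x+2)^2$ gives,

$$2x - 1 = A(x+2)^2 + (Bx + C)(x+3)$$

Substituting $x = -3$ gives $A = -7$. Equating the $x^2$ coefficients gives $0 = A + B$, so $B = 7$, and equating the constant terms gives $-1 = 4A + 3C$, so $C = 9$. Hence,

$$\frac{2x-1}{(x+3)(x+2)^2} = \frac{-7}{x+3} + \frac{7x + 9}{(x+2)^2}$$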

\section*{ODE's}

\subsection*{Separable ODE}

Separate then integrate.

\subsection*{Linear ODE}

The ODE:

$$ \frac{dy}{dx} + f(x)y=g(x) $$

has solution,

$$ y(x) = \frac{1}{u(x)}\left [ \int u(x)g(x)\ dx + C \right] $$

where,

$$ u(x) := e^{\int f(x)\ dx} $$
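
As an illustration (an example chosen here, not from the notes), for $\frac{dy}{dx} + 2y = e^x$ we have $f(x) = 2$ and $g(x) = e^x$, so $u(x) = e^{2x}$ and,

$$ y(x) = e^{-2x}\left[ \int e^{2x} e^{x}\ dx + C \right] = \frac{1}{3}e^{x} + Ce^{-2x} $$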

\subsection*{Exact ODE}

The ODE:

$$ \frac{dy}{dx} = -\frac{M(x,y)}{N(x,y)} $$

or as,

$$ M(x,y)dx + N(x,y)dy = 0 $$

is exact when,

$$ \frac{\partial M}{\partial y} = \frac{\partial N}{\partial x} $$

Assume solution is of the form,

$$ F(x,y) = c $$

with,

$$ M = \frac{\partial F}{\partial x} \qquad N = \frac{\partial F}{\partial y}$$

Integrate to find two equations equal to $F(x,y)$, then compare and find solution from assumed form.
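
For example (a made-up ODE to illustrate the steps), take $2xy\,dx + (x^2 + 3y^2)\,dy = 0$. Here $\frac{\partial M}{\partial y} = 2x = \frac{\partial N}{\partial x}$, so the ODE is exact. Integrating,

$$ F = \int 2xy\ dx = x^2 y + g(y) \qquad F = \int (x^2 + 3y^2)\ dy = x^2 y + y^3 + h(x)$$

Comparing the two, $g(y) = y^3$ and $h(x) = 0$, so the solution is $x^2 y + y^3 = c$.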

\subsection*{Second Order ODE's}

The ODE:

$$ay'' + by' + cy = f(x)$$

For the homogeneous case ($f(x) = 0$)\\

the characteristic equation will be $a\lambda^2 + b\lambda + c = 0$

If the characteristic equation has,\\

Two Distinct Real roots $\lambda_1$ and $\lambda_2$,

$$ y = Ae^{\lambda_1 x} + Be^{\lambda_2 x}$$

Repeated Real roots,

$$ y = Ae^{\lambda x} + Bxe^{\lambda x}$$

Complex roots,

$$ y = e^{\alpha x}(A\cos \beta x + B\sin \beta x)$$

where,

$$\lambda = \alpha \pm \beta i$$

For the non-homogeneous case,

$$y = y_h + y_p$$

$$y = \mbox{solution to homogeneous case} + \mbox{particular solution}$$

Guess something that is in the same form as the RHS.\\

If $f(x) = P(x)\cos ax$ (or $\sin$), a guess for $y_p$ is $Q_1(x)\cos ax + Q_2(x)\sin ax$, where $Q_1$ and $Q_2$ are polynomials of the same degree as $P$.
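
As a short worked sketch (an example chosen here, not from the notes), consider $y'' - 3y' + 2y = e^{3x}$. The characteristic equation $\lambda^2 - 3\lambda + 2 = 0$ has roots $\lambda = 1, 2$, so $y_h = Ae^{x} + Be^{2x}$. Guessing $y_p = ke^{3x}$ gives $9k - 9k + 2k = 1$, so $k = \frac{1}{2}$ and,

$$y = Ae^{x} + Be^{2x} + \frac{1}{2}e^{3x}$$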

\section*{Taylor Series}

\subsection*{Taylor Polynomials}

For a function $f$ that is $n$ times differentiable at $x=a$, the Taylor polynomial of order $n$ at $x=a$ is,

$$P_n(x) = f(a) + f'(a)(x-a) + \frac{f''(a)}{2!}(x-a)^2 + \dots + \frac{f^{\left( n\right) }(a)}{n!}(x-a)^n$$

\subsection*{Taylor's Theorem}

$$f(x) = f(a) + f'(a)(x-a) + \frac{f''(a)}{2!}(x-a)^2 + \dots + \frac{f^{\left( n\right) }(a)}{n!}(x-a)^n + R_n(x)$$

where,\\

$$R_n(x) = \frac{f^{\left( n+1\right) }(c)}{(n+1)!}(x-a)^{n+1}$$

for some $c$ between $a$ and $x$.
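
For example (an illustration, not from the notes), for $f(x) = e^x$ at $a = 0$ with $n = 2$,

$$e^x = 1 + x + \frac{x^2}{2!} + R_2(x) \qquad \mbox{where } R_2(x) = \frac{e^c}{3!}x^3$$

with $c$ some number between $0$ and $x$.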

\subsection*{Sequences}

If $a_n = f(n)$ then,

$$\lim_{x \to \infty} f(x) = L \implies \lim_{n \to \infty}a_n = L$$

which essentially says that the limit of a sequence can be evaluated as the limit of the corresponding function.

A sequence diverges when $\displaystyle \lim_{n \to \infty}a_n = \pm \infty$ or $\displaystyle \lim_{n \to \infty}a_n$ does not exist.

\subsection*{Infinite Series}

\subsubsection*{Telescoping Series}

Most of the terms cancel out.

\subsubsection*{$n$-th term test (shows divergence)}

$\displaystyle \sum_{n=1}^{\infty} a_n$ diverges if $\displaystyle \lim_{n \to \infty}{a_n}$ fails to exist or is non-zero.

\subsubsection*{Integral Test}

Draw a picture. Use when you can easily find the integral.

\subsubsection*{$p$-series}

The infinite series $\displaystyle \sum_{n=1}^{\infty} \frac{1}{n^p}$ converges if $p>1$ and diverges otherwise.

\subsubsection*{Comparison Test}

Compare to a p-series.

\subsubsection*{Limit form of Comparison Test}

Look at $\displaystyle L = \lim_{n \to \infty}{\frac{a_n}{b_n}}\;$ where $a_n, b_n > 0$ and $\sum b_n$ is usually a p-series.\\

If $0 < L < \infty$, then $\sum a_n$ and $\sum b_n$ both converge or both diverge.\\

If $L = 0$ and $\sum b_n$ converges, then $\sum a_n$ converges.\\

If $L = \infty$ and $\sum b_n$ diverges, then $\sum a_n$ diverges.

\subsubsection*{Ratio Test}

$$\lim_{n \to \infty} \left| \frac{a_{n+1}}{a_n} \right| = \rho$$

The series converges if $\rho < 1$,\\

the series diverges if $\rho > 1$ or $\rho$ is infinite,\\

and the test is inconclusive if $\rho = 1$.
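
For example (an illustration, not from the notes), for $\displaystyle \sum_{n=1}^{\infty} \frac{n}{2^n}$,

$$\frac{a_{n+1}}{a_n} = \frac{n+1}{2^{n+1}} \cdot \frac{2^n}{n} = \frac{n+1}{2n} \rightarrow \frac{1}{2}$$

so $\rho = \frac{1}{2} < 1$ and the series converges.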

\subsubsection*{Alternating Series Test}

The series,

$$\sum_{n=1}^{\infty} (-1)^{n+1} u_n = u_1 - u_2 + u_3 - u_4 + \dots$$

converges if,

\begin{enumerate}

\item The $u_n$'s are all $>0$,

\item $u_n \ge u_{n+1}$ for all $n \ge N$ for some integer $N$, and

\item $u_n \rightarrow 0$.

\end{enumerate}

\subsubsection*{Absolute Convergence}

If $\displaystyle \sum_{n=1}^{\infty} |a_n|$ converges, then $\displaystyle \sum_{n=1}^{\infty} a_n$ converges.

\subsection*{Taylor Series}

A Taylor polynomial is a finite sum of terms, whereas a Taylor series is an infinite sum.\\

The Maclaurin series is the Taylor series at $x=0$.

\subsection*{Power Series}

\section*{More Calculus}

\subsection*{Average Value of a Function}

$$\frac{\displaystyle \int_a^b f(x)\ dx}{b-a}$$

\subsection*{Arc Length}

\begin{center}

Arc length over $\displaystyle[a,b] = \int_a^b \sqrt{1+f'(x)^2}\ dx$\\

\end{center}

$$s = \int_a^b \sqrt{x'(t)^2 + y'(t)^2}\ dt \qquad \mbox{(parametric)}$$

\subsection*{Speed}

$$\frac{ds}{dt} = \sqrt{x'(t)^2 + y'(t)^2}$$

\subsection*{Surface Area of Revolution}

$$2\pi \int_a^b f(x) \sqrt{1+f'(x)^2}\ dx$$

$$2\pi \int_a^b y(t) \sqrt{x'(t)^2 + y'(t)^2}\ dt \qquad \mbox{(parametric)}$$

\end{document}

A Letter to the Board of Studies NSW

Here is a letter I wrote to the Board of Studies (service@bos.nsw.edu.au).

Hello,

I’m not exactly sure who I should address this to, so I hope you can pass it along to the relevant person.

I am writing to ask that the Office of the Board of Studies consider licensing their syllabi and examination materials under an open content license (such as Creative Commons, GNU Free Documentation License or another open content license). Currently the Board’s course syllabi, HSC and SC examinations and Notes from the Marking Centre are licensed in a way that prevents redistribution and derivative works. The current status of the copyright licenses hinders students’ and teachers’ ability to use the syllabi and examination materials for study through sharing and collaboration of content.

For example, it is my understanding that students, teachers and anyone else cannot take all the syllabus “dot points”, annotate them with their own content, and republish this for the benefit of others. Similarly the current licence prevents use of syllabus extracts such as “dot points” for collaborative works using modern web tools (such as wikis).

Please note that I have published this letter on the internet (https://andrewharvey4.wordpress.com/2009/03/14/a-letter-to-the-board-of-studies-nsw). If you agree to any reply to this letter being posted online (with credit of course), please let me know; otherwise I will not publish any reply.

Thank you for your time,

Andrew Harvey

(Past HSC student, currently a university student)

(EDIT: A related post, https://andrewharvey4.wordpress.com/2008/12/24/board-of-studies-nsw-and-their-syllabi-copyright-license/)

COMP2911 Wk 01

A few random things I’ve picked up this week that I think are noteworthy.

To my understanding,

- jre – Java Runtime Environment (of which the jvm (Java Virtual Machine) is a part):

When you compile a Java program, Java bytecode is produced, not machine code. The JVM interprets this bytecode (or compiles it to machine code at run time) so that the program can run. This allows Java programs to be run on whatever platform you have a JRE for.

- jdk – Java Development Kit:

This includes the compiler (javac) that produces Java bytecode from your Java source.

————————————–

Some indicators of Good Design,

- Don’t notice it/less obvious/doesn’t draw your attention

Some indicators of Bad Design,

- Unnecessary effort to get it to do what you want it to do

- Not fulfilling its purpose for its intended audience

————————————–

Eclipse and NetBeans are both IDEs. They pretty much do the same thing, though NetBeans is made by Sun.

————————————–

I think these two slides from Potter’s notes sum things up nicely.

An extension of this point class is, (the arrows show which variables Java is referring to)

And some sample code to use this class,

Point p = null;
p = new Point(1, 2);
System.out.println(p.getCoord());

An old memory of Linux

I only started using Linux when I started Uni (2008). I knew nothing about it prior to this. I had heard of it but that is all. I now wish I’d become aware of it sooner, but then I realised I had had the chance. A friend of mine introduced me to it in year 10 (2005) and I even got an Ubuntu 4, 5 or 6 CD (I can’t recall which one). But I never used it. Why not? Well, it occurred to me that Windows was the sole reason for me not at least testing Linux out. I had had some bad experiences with some Windows operating systems and installing Windows operating systems. So I had the thought “I don’t want to risk breaking my computer/losing things again with another OS install” just to test this Linux thing out. Huge mistake! Though at least I’ve learnt from this experience, and I’m trying to try things out and give them a go before I just assume them to be poorer quality or choose not to use them.

One of the best pieces of advice that I would give is to try new things out; if you don’t try it you won’t know what you’re missing. For if I was always too afraid to try new things out I would still be using Windows 95.

Tales of Dvorak – Part II

A while ago I posted My Tale of the Switch to the Dvorak Layout. I’ve realised that some things have changed since then.

I just did the KTouch training session where I took 61 seconds to type 228 characters (38 words) which equals 216 characters per minute or 37 words per minute. I had an accuracy of 97% (6 mistyped characters). Hopefully when I find the other sessions that come with KTouch I will be able to get some more data.

Mostly I can type without looking at the keyboard; however, as I’m still learning, there are occasions when I need to look at the keyboard. This is okay, it slows me down but it’s not bad. The problem is when I’m in the CSE labs I’m typing in Dvorak on a Qwerty keyboard, so when I go to look down at the keyboard (as this is a habit I’ve gotten into when I get stuck) I get completely lost. And I end up pressing 5 different keys until I find the one I want.

I have a prac exam in June, so I better either hurry up and get better at Dvorak or go back to qwerty and get familiar with it again. Otherwise my prac exam may not go so well.

A few days ago I would have said that I type by remembering where specific letters are, but typing now it seems that’s not the case and my hand movements just come naturally without thinking too much. So it looks like I’m improving.

I don’t want to try typing in qwerty as that seems to really stuff things up badly for me.

SENG4921 – Lec 01 – Theoretical Underpinnings of Ethics

This week’s lecture for SENG4921 – Professional Issues and Ethics was given by Stephen Cohen of the School of History and Philosophy, UNSW. The lecture title was “Theoretical Underpinnings of Ethics”.

It was a very interesting lecture for me, and I thought it was presented very well. You can find his lecture slides from last year here, and the audio here.

This post is basically a summary of the notes I made in the lecture, so you should be able to follow through the slides as it’s in chronological order. I’ve mixed in my own interpretation so it may not be the case that this is exactly what Cohen said and/or meant.

———————————————

- Ethical thinking is about thinking of others’ interests.

- Everybody has the same ethical thinking, (and these are all important)

- Integrity

- Fairness

- Openness

- Compassion

- Honesty

Relativism is doing what the culture is doing just because everyone else is doing it. This does not make it right. For example, say you go to another country where bribery is common. This does not make it right. Nor does the line of thought “everyone else is doing it so it must be okay”.

- People are too quick to play this relative card.

———————————————

For example if you bump into a parked car and dent the other car’s bumper your thinking here is focused on answering the question “What should I do?”. However if for example you are asked to list some important moral characteristics your thinking here is focused on answering the question “What kind of person should I be?”.

These two questions are the underpinnings of ethics.

You don’t always end up doing what you think you should do.

“What kind of person should I be?” relates to a “Code of Ethics”. This is what the employees or organisation are.

“What should I do?” relates to a “Code of Conduct”. This is what the employees or organisation should do.

When we talk about ethics we mean prescriptive ethics. This is what you should or ought to do, compared to descriptive ethics, which is what people actually do.

———————————————

Morals and ethics are really the same.

Ethical is compared to (i.e. very different to)

- Prudential – self interest

- Political – settled by a vote (of opinion).

- Cohen used a good example, where one politician in parliament said they should have a referendum to see if the indigenous people should be given human rights. Another politician then got up and said this is non-negotiable. The indigenous people have a right to human rights, there should not be a referendum to decide this.

- Preference – want rather than should

- Artistic

———————————————

To borrow a diagram from Cohen’s slides,

Rules, consequences, professional code, law, loyalty to your employer, confidentiality, requirements of your role, etc. all factor into ethics.

Rules and consequences are requirements of the morality of an individual (private morality). The others belong to public morality, which comes into play when you occupy a role and is mediated by the institution.

and another diagram,

I think this is the foundation of a lot of Cohen’s message.

- Obligation – You are required to do something (e.g. you have signed in agreement to act on something)

- Duty – e.g. it is your duty to treat every human with respect

- Social Responsibility – Duty without a specific target. eg. ought to do something, whether it be Clean Up Australia, supporting Youth off the streets or the Red Cross, etc.

- Good Samaritanism – Go the extra mile. eg. stop to help someone with a flat tyre.

- Minimally Decent Samaritanism – eg. if you are wearing a watch and someone asks you for the time you tell them.

- Heroism Self-sacrifice – doing more than you have to do, beyond what is expected. eg. Whistleblowing

———————————————

Cohen’s advice, “be aware of these things”.

“At work, you don’t leave your private, personal values at the door!”

“Your ethical values must be there.”

All these things often conflict, but you must make a decision. You may ask “Who’s to judge” these things. The answer is always “You, as an individual.” (from whatever perspective whether it be legal, ethical…)

I feel this is a key and important part of the lecture, and I know that this is not easy.

You have an obligation to your employer, at the same time you have your own ethics which may conflict. Would you do something you think is unethical just because you don’t want to lose your job? I don’t know what I would do…

———————————————

Cohen gives another good example. Say there was a rule that said all motor vehicles must stop at a stop sign. But what about police who are chasing a criminal? What about an ambulance? What about people rushing someone injured to the hospital? As Cohen puts it, the right thing to do in all these cases is to run the stop sign. But we will continue to find more cases. The rules cannot cover them all.

The point he is trying to make is “you can’t clear up ethics stuff with more rules.” You need to make an ethical judgement.

I agree with this. Though this sounds like a tough legal issue. Who would be the judge of the ethical judgement? What if you broke the law in order to do what you thought was ethical? Would claiming you made an ethical judgement hold up in a lawsuit? I’m guessing not.

———————————————

Another good example Cohen gave was the “dead man’s brake”. As it was told, State Rail implemented the dead man’s brake in its trains, so if the driver got off the seat a brake would be applied. The drivers didn’t like this so some disabled it. As a result a train crashed. So what did State Rail then try to do? They tried to make a fool-proof brake. This doesn’t work.

———————————————

Whistleblowing – As Cohen puts it, the whistleblower almost always suffers. It’s something that you are never obligated to do.

References:

Cohen, Stephen. Theoretical Underpinnings of Ethics. 2009. SENG4921 – Professional Issues and Ethics Lecture 1.